ENGINEERING BLOG

OneDialect

A Unified Assistive Communication System

OneDialect is a fully implemented assistive communication system that translates between speech, text, and tactile signals, forming a reliable bidirectional interaction loop across tightly integrated embedded and software layers.

- Embedded Systems

- C++

- Python

- Fusion 360

- Signal Processing

- Human-Machine Interface

- Wireless Systems

Problem Space

Communication systems for differently abled users were fragmented, with each one addressing a single impairment in isolation. As a result, users either depended on external assistance or had to learn specialized communication methods.

A unified system was engineered to eliminate this fragmentation by enabling seamless translation across speech, text, and tactile communication channels within a single device.

System Architecture

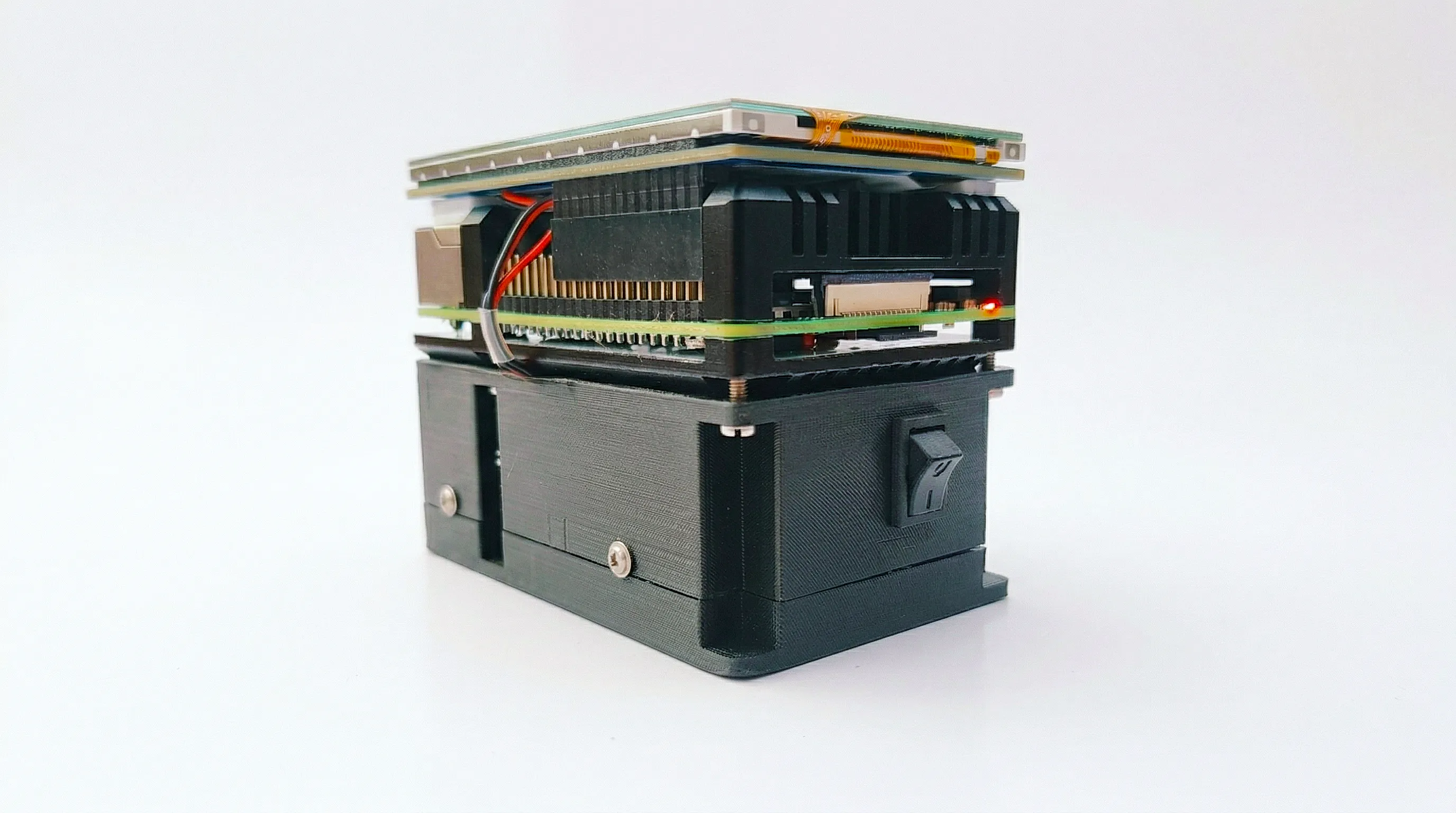

The system was implemented using a dual-layer architecture separating computation and real-time interaction:

- Processing Layer: Speech acquisition, preprocessing, text conversion, encoding

- Interaction Layer: Deterministic control via microcontroller

This separation keeps timing-critical input and actuation on the microcontroller, so response stays low-latency and predictable regardless of the load on the processing layer.

Operational Flow

Speech input is captured, processed, and converted into text. The text is encoded into Morse patterns and transmitted wirelessly to a handheld unit, where it is rendered as vibration sequences.
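The encoding step can be illustrated with a minimal Python sketch. It assumes standard International Morse timing (a dash lasts three dot units, with gaps of one, three, and seven units between symbols, letters, and words). The 100 ms unit, the function names, and the `(motor_on, duration_ms)` schedule format are illustrative assumptions, not the project's actual code.

```python
# Sketch only: text -> Morse string -> vibration schedule.
# UNIT_MS and the schedule format are assumptions made for illustration.

MORSE = {
    "A": ".-", "B": "-...", "C": "-.-.", "D": "-..", "E": ".", "F": "..-.",
    "G": "--.", "H": "....", "I": "..", "J": ".---", "K": "-.-", "L": ".-..",
    "M": "--", "N": "-.", "O": "---", "P": ".--.", "Q": "--.-", "R": ".-.",
    "S": "...", "T": "-", "U": "..-", "V": "...-", "W": ".--", "X": "-..-",
    "Y": "-.--", "Z": "--..",
    "0": "-----", "1": ".----", "2": "..---", "3": "...--", "4": "....-",
    "5": ".....", "6": "-....", "7": "--...", "8": "---..", "9": "----.",
}

UNIT_MS = 100  # assumed duration of one dot


def text_to_morse(text):
    """Encode text as Morse: letters separated by ' ', words by ' / '.

    Characters without a Morse mapping are silently skipped.
    """
    words = text.upper().split()
    return " / ".join(
        " ".join(MORSE[ch] for ch in word if ch in MORSE) for word in words
    )


def morse_to_schedule(morse, unit_ms=UNIT_MS):
    """Expand a Morse string into (motor_on, duration_ms) steps."""
    schedule = []
    for w_i, word in enumerate(morse.split(" / ")):
        if w_i:
            schedule.append((False, 7 * unit_ms))      # gap between words
        for l_i, letter in enumerate(word.split(" ")):
            if l_i:
                schedule.append((False, 3 * unit_ms))  # gap between letters
            for s_i, symbol in enumerate(letter):
                if s_i:
                    schedule.append((False, unit_ms))  # gap between symbols
                on_time = unit_ms if symbol == "." else 3 * unit_ms
                schedule.append((True, on_time))
    return schedule
```

For example, `morse_to_schedule(text_to_morse("SOS"))` yields a sequence of motor-on and motor-off steps that the handheld unit could play back one after another.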

Reverse communication uses Morse input on a physical switch: the input is decoded, converted into text, and synthesized into speech output, completing the two-way interaction loop.
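The decoding direction can be sketched in the same spirit. The example below classifies switch press and release durations into dots, dashes, and gaps, then looks up each letter. The thresholds (two and five dot units), the 100 ms unit, and the event format are assumptions chosen for illustration; only letters are included to keep the table short.

```python
# Sketch only: timed switch events -> text.
# Events are (pressed, duration_ms) pairs; this format is an assumption.

CODES = (
    ".- -... -.-. -.. . ..-. --. .... .. .--- -.- .-.. -- "
    "-. --- .--. --.- .-. ... - ..- ...- .-- -..- -.-- --.."
).split()
DECODE = dict(zip(CODES, "ABCDEFGHIJKLMNOPQRSTUVWXYZ"))


def decode_events(events, unit_ms=100):
    """Turn press/release durations into text.

    Presses shorter than 2 units count as dots, longer ones as dashes.
    Releases of at least 2 units end a letter; at least 5 units end a word.
    Unknown symbol sequences decode to '?'.
    """
    text = []
    symbol = ""

    def flush():
        nonlocal symbol
        if symbol:
            text.append(DECODE.get(symbol, "?"))
            symbol = ""

    for pressed, duration in events:
        if pressed:
            symbol += "." if duration < 2 * unit_ms else "-"
        elif duration >= 5 * unit_ms:
            flush()
            text.append(" ")   # word boundary
        elif duration >= 2 * unit_ms:
            flush()            # letter boundary
    flush()
    return "".join(text)
```

Placing the thresholds midway between the nominal durations (1 vs 3 units, 3 vs 7 units) leaves some tolerance for uneven keying, although a real device would likely need tuning per user.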

System reliability is defined by deterministic behavior under constrained conditions.

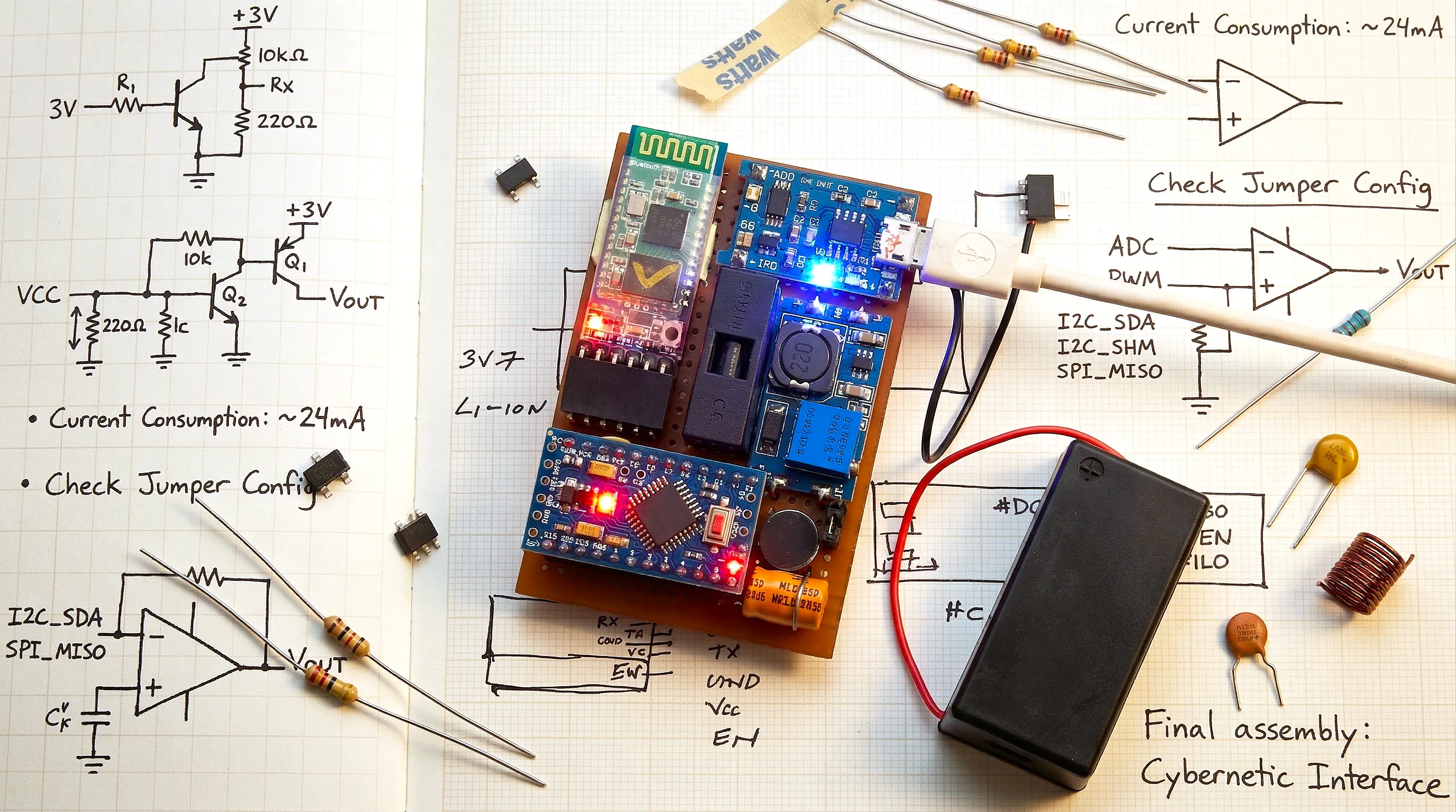

Embedded System Implementation

The interaction unit was implemented on the ATmega328P microcontroller, which handles real-time signal processing and actuator control:

- Interrupt-driven Morse input decoding

- PWM-controlled vibration feedback

- UART-based Bluetooth communication

- Battery-optimized low-power operation
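Because a UART link delivers bytes in arbitrary chunks, the receiving side has to reassemble complete messages. The sketch below shows one common way to do this on the processing-layer side using newline-terminated frames. The newline framing, the `LineFramer` class, and the `frame` helper are hypothetical; the source does not describe the actual wire format.

```python
# Sketch only: reassembling newline-terminated messages from serial chunks.
# The framing scheme and all names here are assumptions.


class LineFramer:
    """Buffers incoming bytes and returns complete lines as they arrive."""

    def __init__(self, terminator=b"\n"):
        self._buffer = bytearray()
        self._terminator = terminator

    def feed(self, chunk):
        """Add a received chunk and return any messages it completed."""
        self._buffer.extend(chunk)
        messages = []
        while True:
            idx = self._buffer.find(self._terminator)
            if idx < 0:
                break  # no complete message yet; keep buffering
            raw = bytes(self._buffer[:idx])
            del self._buffer[: idx + len(self._terminator)]
            # Tolerate CRLF line endings from the remote side.
            messages.append(raw.decode("ascii", errors="replace").rstrip("\r"))
        return messages


def frame(message):
    """Encode one outgoing message as an ASCII line."""
    return message.encode("ascii") + b"\n"
```

In use, each chunk read from the serial port would be passed to `feed`, and every returned string handled as one complete Morse message.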

Hardware-level protection covers overcharge, deep discharge, short circuits, and thermal faults.

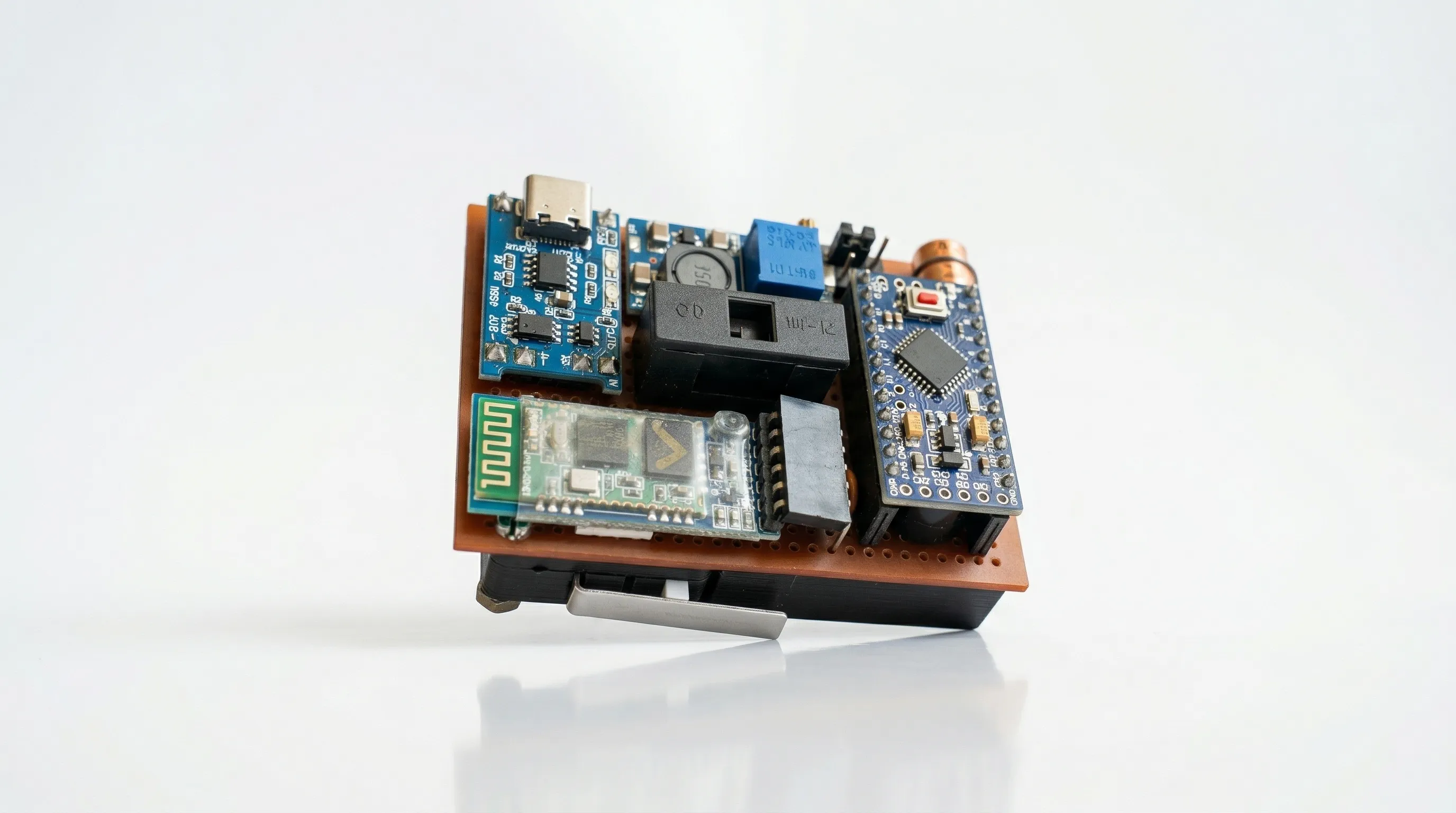

Hardware Integration

The system integrates multiple hardware modules into a stable embedded runtime:

- ATmega328P microcontroller

- Raspberry Pi 4 Model B

- Z-Axis haptic motor (Linear Resonant Actuator)

- HC-05 Bluetooth module

- Active buzzer (audio feedback)

- SPDT switch (input interface)

- Li-ion battery with protection circuitry

Software & Control Logic

Firmware was implemented using an event-driven architecture with state-based control:

- Input capture → decode → state transition

- Serial communication handling

- Output scheduling and actuation
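The event-driven, state-based control pattern can be modeled with a small transition table. The following Python sketch is a model of the idea, not the firmware itself: the states, events, and action names are all hypothetical and chosen only to mirror the capture, decode, and actuation steps listed above.

```python
# Sketch only: a table-driven state machine modeling the control flow.
# All states, events, and action names are hypothetical.
from enum import Enum, auto


class State(Enum):
    IDLE = auto()
    CAPTURING = auto()   # user is keying Morse on the switch
    PLAYING = auto()     # vibration sequence is being rendered


class Event(Enum):
    SWITCH_EDGE = auto()
    INPUT_TIMEOUT = auto()
    SERIAL_RX = auto()
    PLAYBACK_DONE = auto()


# (current state, event) -> (next state, action name)
TRANSITIONS = {
    (State.IDLE, Event.SWITCH_EDGE): (State.CAPTURING, "start_capture"),
    (State.CAPTURING, Event.SWITCH_EDGE): (State.CAPTURING, "record_edge"),
    (State.CAPTURING, Event.INPUT_TIMEOUT): (State.IDLE, "send_decoded_text"),
    (State.IDLE, Event.SERIAL_RX): (State.PLAYING, "start_vibration"),
    (State.PLAYING, Event.PLAYBACK_DONE): (State.IDLE, "stop_vibration"),
}


def step(state, event):
    """Return (next_state, action); unhandled events leave the state as is."""
    return TRANSITIONS.get((state, event), (state, None))


def run(events, state=State.IDLE):
    """Feed a sequence of events through the machine and collect actions."""
    actions = []
    for event in events:
        state, action = step(state, event)
        if action is not None:
            actions.append(action)
    return state, actions
```

Keeping every transition in one table makes the behavior easy to audit: any event not listed for a state is simply ignored, which is one way to keep responses predictable.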

Processing layer pipelines include:

- Speech-to-text conversion

- Text-to-Morse encoding

- Morse-to-text decoding

- Text-to-speech synthesis

Cost Engineering

The complete system was built for approximately ₹16,700, with the processing unit as the largest cost component. Components were selected to balance reliability and affordability.

Validation

The system was fully implemented and tested under real usage conditions. The prototype demonstrated:

- Low-latency communication

- Accurate Morse encoding and decoding

- Stable wireless data transfer

- Consistent tactile and audio feedback

Future Scope

The architecture supports extension into:

- Multi-language processing

- Wearable form factors

- Emergency alert systems

- IoT-integrated assistive ecosystems

Outcome

A complete embedded communication system was designed, implemented, and validated. The solution demonstrates capability across hardware design, firmware engineering, and applied software systems under production-oriented constraints.